| title | Configure SQL Server container in Kubernetes for high availability | Microsoft Docs |

|---|---|

| description | This tutorial shows how to deploy a SQL Server high availability soluion with Kubernetes on Azure Container Service. |

| author | MikeRayMSFT |

| ms.author | mikeray |

| manager | jhubbard |

| ms.date | 01/02/2018 |

| ms.topic | tutorial |

| ms.prod | sql-non-specified |

| ms.prod_service | database-engine |

| ms.service | |

| ms.component | sql-linux |

| ms.suite | sql |

| ms.custom | mvc |

| ms.technology | database-engine |

| ms.workload | Inactive |

[!INCLUDEtsql-appliesto-sslinux-only]

In this article, you will configure a SQL Server instance on Kubernetes in Azure Container Service (AKS) with persistent storage for high availability.

This tutorial demonstrates how to configure a highly available SQL Server instance in containers using AKS.

[!div class="checklist"]

- Install kubectl

- Set up the cluster

- Configure storage

- Create a stateful set

- Connect to the container with SQL Server Management Studios (SSMS)

- Verify failure and recovery

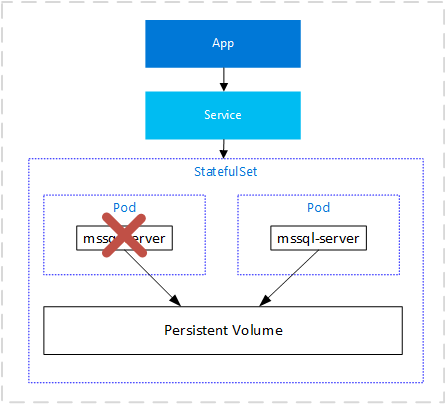

Kubernetes 1.6+ has support for Storage Classes, Persistent Volume Claims, and the Azure disk volume driver. You can create and manage your SQL Server instances natively in Kubernetes. For additional high availability, you can use a StatefulSet. This article includes Kubernetes specs on how to deploy SQL Server on Kubernetes cluster running on Azure Container Service and how to use a StatefulSet to achieve a high availability configuration similar to shared disk failover cluster instance. In this configuration, Kubernetes plays the role of the cluster orchestrator. Upon a failure of SQL Server instance running in a container, the orchestrator bootstraps another instance of the container that attaches to the same persistent storage, which maps to Azure disk.

-

An Azure Container Service (AKS) cluster.

If you are not familiar with AKS clusters, you can follow the instructions on Deploy an Azure Container Service (AKS) cluster to create an AKS cluster.

Configure a persistent volume, and persistent volume claim in the Kubernetes cluster. For background on Kuberntes storage, see Persistent Volumes. Complete the following steps:

-

Create a manifest to define the storage class and the persistent volume claim. The manifest specifies the storage provisionioner, paramaters and the reclaim policy. The Kubernetes cluster will use this manifest to create the persistent storage.

The following yaml example defines a storage class and persistent volume claim. The storage class is named

azure-diskand the persistent volume claim is namedmssql-data. The persistent volume claim metadata includes an annotation connecting it back to the the storage class.kind: StorageClass apiVersion: storage.k8s.io/v1beta1 metadata: name: azure-disk provisioner: kubernetes.io/azure-disk parameters: storageaccounttype: Standard_LRS kind: Managed --- kind: PersistentVolumeClaim apiVersion: v1 metadata: name: mssql-data annotations: volume.beta.kubernetes.io/storage-class: azure-disk spec: accessModes: - ReadWriteOnce resources: requests: storage: 8Gi

Save the file, for example pvc.yml.

-

Create the persistent volume claim in Kubernetes.

kubectl apply -f <Path to PVC.yaml file><Path to PVC.yaml file>- The location where you saved the file.

The persistent volume is automatically created as an Azure storage account, and bound to the persistent volume claim.

-

Verify the persistent volume claim.

kubectl describe pvc <PersistentVolumeClaim><PersistentVolumeClaim>- The name of the persistent volume claim.

In the preceding step, the persistent volume claim is named

mssql-data. To see the metadata about the persistent volume claim, run the following command.kubectl describe pvc mssql-dataThe returned metadata includes a value called

Volume. This value maps to the name of the blob.Note the value for volume in the command prompt image above, matches part of the name of the blob in the Azure portal image below.

-

Verify the persistent volume.

kubectl describe pvkubectlreturns metadata about the persistent volume that was automatically created and bound to the persistent volume claim.

Create a secret in the Kubernetes cluster to store the SA password for SQL Server.

-

Create a yaml file for for the secret that includes the SA password.

apiVersion: v1 kind: Secret metadata: name: mssql type: Opaque data: SA_PASSWORD: UEBzc3dvcmQxMg==

<ComplexPassword>- The password you will use for the SA account.

Save the file as

secret.yaml. -

Create the secret in the Kubernetes cluster.

kubectl apply -f <Path to secret.yaml file>

For additional information about secrets in Kubernetes, see Secrets.

In this example, the SQL Server container is described as a Kubernetes deployment object. In this step, create a manifest to describe the container based on the Microsoft SQL Server mssql-server-linux image. The manifest references the mssql-server persistent volume claim, and the mssql secret which you already applied to the Kubernetes cluster.

-

Create a yaml file describing the deployment. The following example describes a stateful set including a container based on the SQL Server container image.

--- apiVersion: apps/v1beta1 kind: Deployment metadata: name: mssql-deployment spec:

replicas: 1

template:

metadata:

labels:

app: mssql

spec:

containers:

- name: mssql

image: microsoft/mssql-server-linux

env:

- name: ACCEPT_EULA

value: "Y"

- name: MSSQL_PID

value: "Developer"

- name: SA_PASSWORD

valueFrom:

secretKeyRef:

name: mssql

key: SA_PASSWORD

volumeMounts:

- name: mssqldb

mountPath: /var/opt/mssql

volumes:

- name: mssqldb

persistentVolumeClaim:

claimName: mssql-data

apiVersion: v1 kind: Service metadata: name: mssql-loadbalancer spec: selector: app: mssql ports: - protocol: TCP port: 1433 targetPort: 1433 type: LoadBalancer

Copy the preceding code into a new file, named `sqldeployment.yaml`. Update the following values.

* `value: "Developer"`

* Sets the container to run SQL Server developer edition. If it is not for production data, you can use `Developer`. If it is for production use, select the appropriate edition. Can be one of `Enterprise`, `Standard`, or `Express`.

>[!NOTE]

>For more information, see [How to license SQL Server](http://www.microsoft.com/sql-server/sql-server-2017-pricing).

* `persistentVolumeClaim`

* This value requires an entry for `claimName:` that maps to the name used for the persistent volume claim. This article uses `mssql-data`.

>[!NOTE]

>By using the `LoadBalancer` service type, the SQL Server container is accessible remotely (via the internet) at port 1433.

Save the file, for example **sqldeployment.yaml**.

1. Create the stateful set.

```azurecli

kubectl apply -f <Path to sqldeployment.yaml file>

<Path to sqldeployment.yaml file>- `The location where you saved the file.

The stateful set is created, with SQL Server running as a pod in the kubernetes cluster with connection to persistent storage.

-

Verify the services are running. Run the following command:

kubectl get servicesNote the IP address for the SQL Server container.

If you configured the container as described, you can connect with SSMS from outside of the Azure virtual network. To access via SSMS, use the external IP Address. If needed, supply the port of the instance. For example, 1433.

To verify failure and recovery you can delete the pod. Do the following steps:

-

List the pod running SQL Server.

kubectl get podsNote the name of the pod running SQL Server.

-

Delete the pod.

kubectl delete pod mssql-statefulset-0Note that

mssql-statefulset-0is the value returned from the previous step for pod name.

Kubernetes will automatically recreate the pod to recover a SQL Server container and connect to the persistent storage.

In this tutorial, you learned how to

[!div class="checklist"]

- Install kubectl

- Set up the cluster

- Configure storage

- Create a stateful set

- Connect to the container with SSMS

- Verify failure and recovery

[!div class="nextstepaction"] Intro - Kubernetes